In routine water and wastewater testing, “accuracy” is often discussed as if it were purely an instrument issue. Many laboratories assume that higher optical resolution, more advanced hardware, and more expensive instruments will naturally produce more accurate results.

From an engineering and laboratory management perspective, this assumption is fundamentally flawed. In routine water and wastewater testing, photometer accuracy is primarily determined by sampling quality, digestion control, reaction consistency, calibration discipline, and operator dependency. Optical performance only becomes critical when it fails to meet method-defined requirements. Understanding what truly affects accuracy is essential for instrument selection, laboratory procedure design, and long-term data reliability.

This article aims to clarify which factors genuinely matter for photometer accuracy in routine testing—and which factors are far less important than commonly believed.

1. Accuracy in Routine Testing: An Engineering Definition

In routine water analysis, “accuracy” does not mean achieving the highest theoretical precision under ideal laboratory conditions. Instead, its practical engineering definition is:

• Results are sufficient to support process control and regulatory decisions

• Stable and consistent correlation with standard methods

• Good repeatability across different operators, shifts, and days

• Traceable, defensible data suitable for audits

From this perspective, accuracy is a system-level outcome, not a single technical specification. In other words, in routine laboratories, accuracy should be evaluated as process reliability over time, not peak instrument performance under ideal conditions.

2. Optical Performance: A Necessary Foundation, Rarely the Bottleneck

Photometer accuracy begins with basic optical requirements:

• A stable light source

• Appropriate wavelength selection

• Acceptable photometer linearity

For modern photometer water quality analyzers, these requirements are typically met well beyond what standard methods demand. Once wavelength stability and linearity fall within method tolerances, further pursuit of “extreme” optical precision yields rapidly diminishing returns.

In routine testing:

• Measurement wavelengths are fixed by standard methods

• Full-spectrum scanning is unnecessary

• Optical resolution is rarely the limiting factor

Engineering conclusion: Optical performance is a prerequisite, but not the dominant driver of accuracy in routine photometer analysis. Optical limitations become relevant only when instruments fail to meet the wavelength accuracy, bandwidth, or linearity explicitly required by standardized methods. Beyond that threshold, further optical refinement does not translate into better routine accuracy.
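
Whether an instrument clears the linearity threshold can be checked with a handful of standards before any optical upgrade is considered. The sketch below is a minimal illustration, not a procedure from any standard: the function name `linearity_report`, the standard concentrations, the absorbance readings, and the 0.999 acceptance threshold are all assumptions chosen for the example. The governing method defines the real criterion.

```python
def linearity_report(concentrations, absorbances):
    """Fit a least-squares line through calibration standards.

    Returns the slope, intercept, coefficient of determination (R^2), and the
    largest residual expressed as a fraction of the highest absorbance.
    """
    n = len(concentrations)
    mean_c = sum(concentrations) / n
    mean_a = sum(absorbances) / n
    sxx = sum((c - mean_c) ** 2 for c in concentrations)
    sxy = sum((c - mean_c) * (a - mean_a)
              for c, a in zip(concentrations, absorbances))
    slope = sxy / sxx
    intercept = mean_a - slope * mean_c
    residuals = [a - (slope * c + intercept)
                 for c, a in zip(concentrations, absorbances)]
    ss_res = sum(r ** 2 for r in residuals)
    ss_tot = sum((a - mean_a) ** 2 for a in absorbances)
    return {
        "slope": slope,
        "intercept": intercept,
        "r_squared": 1 - ss_res / ss_tot,
        "max_relative_deviation": max(abs(r) for r in residuals) / max(absorbances),
    }


# Hypothetical standards (mg/L) and readings, for illustration only.
standards_mg_l = [0, 50, 100, 200, 400]
readings = [0.002, 0.101, 0.199, 0.402, 0.797]

MIN_R_SQUARED = 0.999  # assumed acceptance limit, not taken from any method

report = linearity_report(standards_mg_l, readings)
linearity_ok = report["r_squared"] >= MIN_R_SQUARED
print(f"R^2 = {report['r_squared']:.5f}, acceptable: {linearity_ok}")
```

If a check of this kind passes against the method's own limit, the instrument is past the threshold described above, and remaining effort is better spent elsewhere in the workflow.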

3. Sample Representativeness: The Primary Source of Error

No photometer can correct errors introduced before the sample reaches the instrument.

Key risk points include:

◆ Inadequate mixing of heterogeneous wastewater samples

◆ Improper sampling location or timing

◆ Changes during storage (biodegradation, volatilization, oxidation)

◆ Improper preservation or delayed analysis

In practice, sample representativeness often accounts for the largest share of total measurement uncertainty. For industrial wastewater or high-solids samples, this contribution can reach 50–70%.

Engineering countermeasures:

✓ Define clear sampling SOPs (location, depth, frequency)

✓ Thoroughly homogenize samples before subsampling

✓ Strictly follow the preservation requirements of the method (for example, acidification to pH ≤ 2 and refrigeration at 4 °C)

✓ Document sampling and preservation details for traceability

Engineering insight: Many laboratories attempt to improve accuracy by upgrading instruments, while leaving sampling procedures unchanged. From an engineering perspective, this approach addresses a secondary factor while ignoring the primary error source. Improvements at this stage (like standardizing sampling procedures and strengthening personnel training) often yield far greater accuracy gains than upgrading the instrument itself.
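
The priority argument can be made concrete with a simple uncertainty budget. The sketch below assumes the error sources are independent, so their relative standard uncertainties combine by root-sum-of-squares. Every number in `components` is hypothetical and chosen only to illustrate the arithmetic, and `uncertainty_budget` is a name invented for this example.

```python
import math


def uncertainty_budget(components):
    """Combine independent relative standard uncertainties (in %).

    Returns the combined uncertainty and each component's share of the
    total variance (shares sum to 1).
    """
    total_variance = sum(u ** 2 for u in components.values())
    shares = {name: u ** 2 / total_variance for name, u in components.items()}
    return math.sqrt(total_variance), shares


# Hypothetical relative standard uncertainties in percent.
components = {
    "sampling": 8.0,
    "digestion": 5.0,
    "reaction": 3.0,
    "calibration": 2.0,
    "optics": 1.0,
}

combined, shares = uncertainty_budget(components)

# Compare two improvement options: halve the optical term or halve sampling.
better_optics, _ = uncertainty_budget({**components, "optics": 0.5})
better_sampling, _ = uncertainty_budget({**components, "sampling": 4.0})

print(f"combined: {combined:.2f}%  sampling share: {shares['sampling']:.0%}")
print(f"after halving optics:   {better_optics:.2f}%")
print(f"after halving sampling: {better_sampling:.2f}%")
```

Because the terms add as squares, the largest component dominates the total. With these illustrative inputs, halving the optical term barely moves the combined figure, while halving the sampling term reduces it substantially.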

4. Pretreatment and Digestion Control: The Decisive Factor

For parameters requiring digestion, such as COD, total phosphorus, total nitrogen, and permanganate index, pretreatment quality is critical.

Accuracy depends on:

• Digestion temperature stability (well-to-well variation within ±2 °C)

• Strict digestion time control

• Accurate reagent composition and dosage

• Effective sealing to prevent losses or contamination

Small deviations (e.g., 5 °C lower temperature or 10 minutes shorter digestion) introduce systematic bias, not random noise. No advanced photometer can compensate for incomplete or inconsistent digestion.

Field data: More than 60% of COD nonconformities in monitoring stations were due to digestion issues, not photometer measurement errors.

Engineering countermeasures:

✓ Regularly verify digestion temperature uniformity

✓ Use calibrated timers for consistent digestion time

✓ Include blanks and QC samples in every batch

✓ Validate masking agents or dilution for complex matrices

Engineering insight: For digestion-based parameters, pretreatment quality typically contributes more to total uncertainty than photometer measurement itself.
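
A temperature uniformity check of the kind listed above can be recorded and evaluated in a few lines. This is a minimal sketch under stated assumptions: the ±2 °C tolerance comes from the text, but interpreting it as each well's deviation from the setpoint is an assumption, as are the 150 °C setpoint, the well labels, and the probe readings. `check_block_uniformity` is a hypothetical helper, not part of any instrument software.

```python
def check_block_uniformity(well_temps_c, setpoint_c, tolerance_c=2.0):
    """Flag digestion-block wells that deviate from the setpoint.

    Returns a dict of out-of-tolerance wells and the overall spread
    (hottest well minus coldest well).
    """
    out_of_tolerance = {
        well: temp
        for well, temp in well_temps_c.items()
        if abs(temp - setpoint_c) > tolerance_c
    }
    spread = max(well_temps_c.values()) - min(well_temps_c.values())
    return out_of_tolerance, spread


# Hypothetical reference-probe readings taken after the block has stabilized.
readings = {"A1": 150.4, "B1": 149.1, "C1": 147.6, "D1": 151.2}

flagged, spread = check_block_uniformity(readings, setpoint_c=150.0)
for well, temp in flagged.items():
    print(f"well {well}: {temp} °C is outside tolerance")
print(f"block spread: {spread:.1f} °C")
```

Logging the flagged wells with the date of each verification gives an audit trail, and a flagged well can be taken out of use until the block is serviced.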

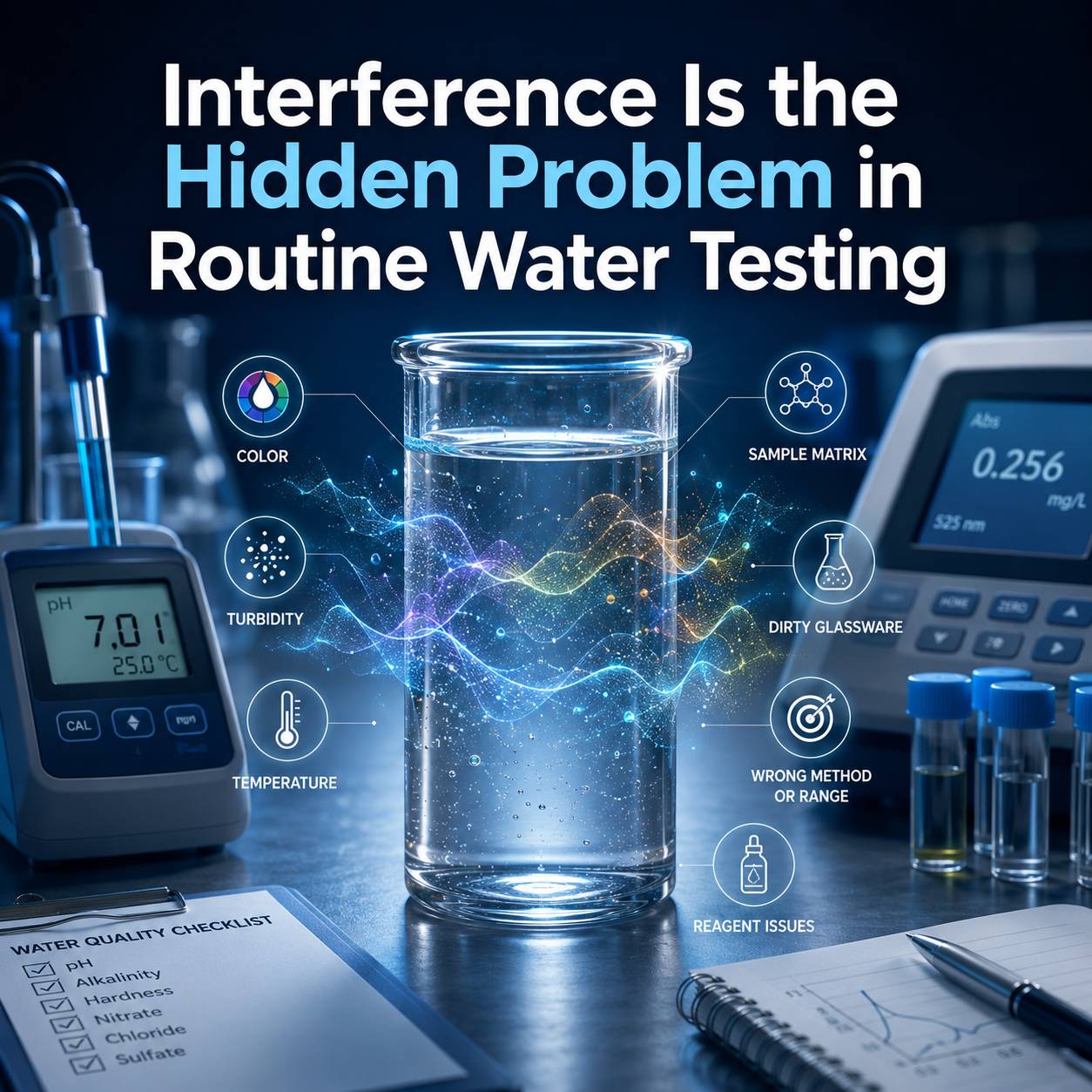

5. Reaction Control: Time, Temperature, and Consistency

Many color reactions are sensitive to:

• Reaction time (sometimes minute- or second-level precision)

• Ambient and sample temperature

• Mixing uniformity

• Reagent freshness

Manual timing and visual judgment are major sources of variability, especially in laboratories with frequent staff rotation. Photometer water quality analyzers improve accuracy not through superior optics, but through workflow standardization, including built-in reaction timers and method-guided procedures.

Supporting data: Studies show that using built-in timers can reduce ammonia nitrogen inter-batch RSD from 8–12% to 3–5%.
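
Inter-batch RSD is straightforward to track in-house, which lets a laboratory verify this kind of improvement on its own data. The sketch below computes the relative standard deviation from the sample standard deviation. Both result lists are hypothetical readings of the same control standard and are not taken from the studies mentioned above.

```python
import statistics


def rsd_percent(values):
    """Relative standard deviation (sample standard deviation / mean) in %."""
    return 100 * statistics.stdev(values) / statistics.mean(values)


# Hypothetical ammonia nitrogen results (mg/L) for one control standard,
# one value per batch, before and after switching to instrument timing.
manual_timing = [1.92, 2.21, 1.85, 2.14, 2.05, 1.80]
built_in_timer = [2.03, 1.97, 2.06, 1.94, 2.01, 1.99]

print(f"manual timing RSD:  {rsd_percent(manual_timing):.1f}%")
print(f"built-in timer RSD: {rsd_percent(built_in_timer):.1f}%")
```

Plotting this figure per month on a control chart makes drift in reaction control visible long before it shows up as a failed proficiency test.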

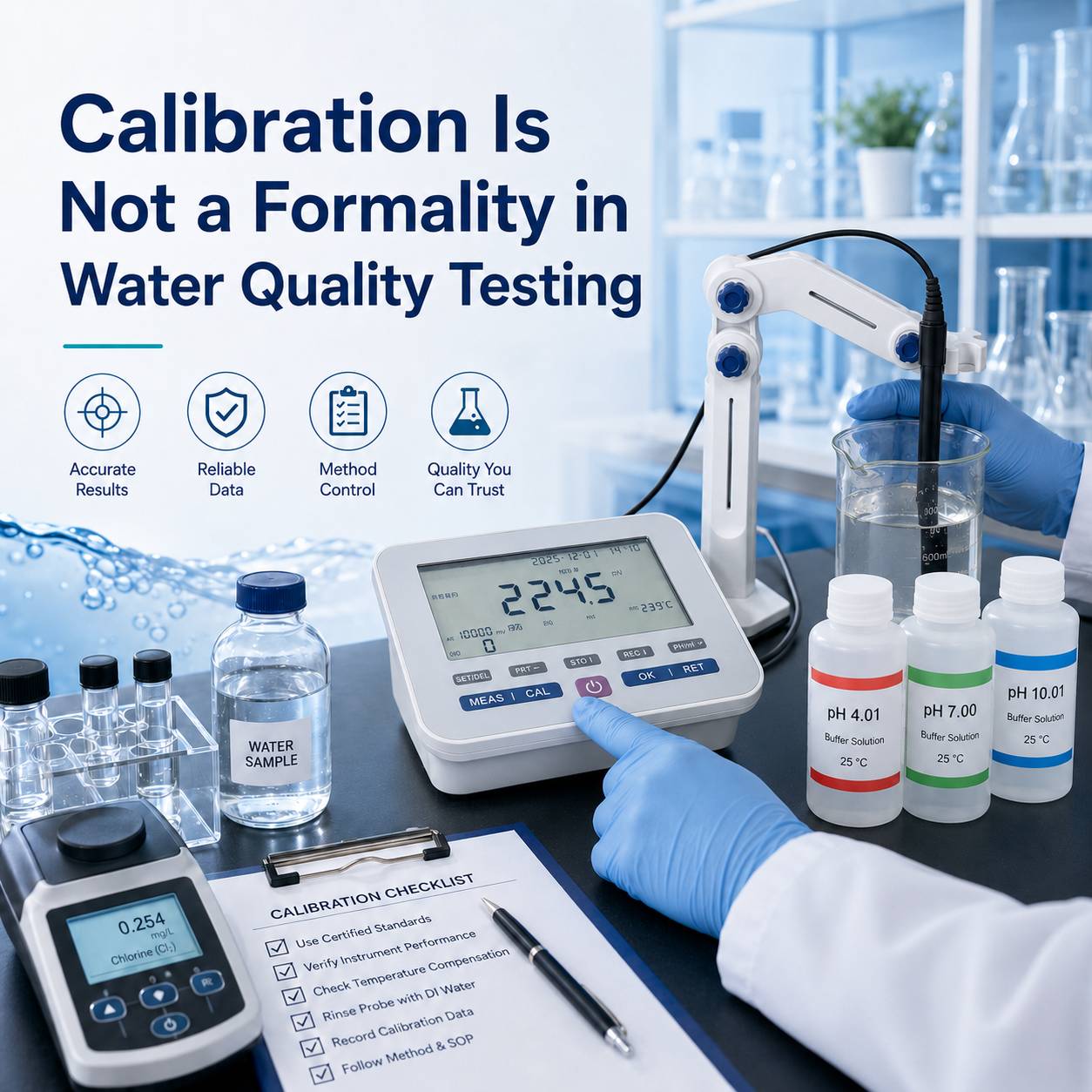

6. Calibration Curve Management: Discipline Over Mathematics

Calibration curves are not temporary tools, but core quality assets.

Accuracy depends on:

• Correct preparation of standard solutions

• Matrix matching when required

• Regular verification and drift correction

Even the most sophisticated curve-fitting algorithms are meaningless if:

◆ Standards are prepared incorrectly

◆ Instrument drift is not monitored

Best practices:

✓ Verify curves with certified reference materials

✓ Use “master curve + single-point correction” for high-frequency parameters
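
One way to read “master curve + single-point correction” is sketched below: a stored linear curve converts absorbance to concentration, and a single check standard measured on the day yields a correction factor applied to the sample results. This reading is an assumption, as are the class and function names, the curve coefficients, and the ±10% drift limit beyond which the sketch refuses to correct and demands recalibration.

```python
from dataclasses import dataclass


@dataclass
class MasterCurve:
    slope: float      # absorbance per mg/L
    intercept: float  # absorbance at zero concentration

    def concentration(self, absorbance):
        """Convert absorbance to concentration using the stored curve."""
        return (absorbance - self.intercept) / self.slope


def single_point_factor(curve, check_absorbance, check_nominal, max_drift=0.10):
    """Correction factor from one check standard of known concentration.

    Raises ValueError if the factor deviates from 1 by more than max_drift,
    because large drift calls for a new curve rather than a correction.
    """
    measured = curve.concentration(check_absorbance)
    factor = check_nominal / measured
    if abs(factor - 1.0) > max_drift:
        raise ValueError(f"correction factor {factor:.3f} exceeds drift limit")
    return factor


# Hypothetical master curve and daily check standard (nominal 100 mg/L).
curve = MasterCurve(slope=0.002, intercept=0.002)
factor = single_point_factor(curve, check_absorbance=0.196, check_nominal=100.0)

sample_absorbance = 0.302
corrected = curve.concentration(sample_absorbance) * factor
print(f"factor: {factor:.4f}, corrected result: {corrected:.1f} mg/L")
```

Recording each day's factor alongside the results keeps the correction traceable and shows when the master curve itself needs to be rebuilt.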

7. Operator Dependency: A Hidden Accuracy Risk

Common laboratory challenges include:

• Frequent staff turnover

• Mixed technical backgrounds

• High workload

Accuracy suffers when:

◆ Wavelengths are manually selected: risk of misconfiguration (e.g., confusing the wavelength for high-range COD with that for low-range COD)

◆ Concentrations are calculated externally: calculation errors and improper rounding of significant figures

◆ Results are transcribed by hand: transcription errors and unit confusion

◆ Steps are skipped or forgotten: for example, omitting a reagent or failing to start the timer

Dedicated water photometers embed analytical logic into the instrument:

✓ Method-bound wavelength control: after the COD method is selected, for example, the instrument automatically switches to the corresponding wavelength, eliminating manual selection errors

✓ Direct concentration readout: no external calculation is required

✓ Automatic data storage with traceability: results are saved automatically with time, method, and operator information, eliminating transcription errors

Engineering conclusion: Reducing operator dependency is one of the most effective ways to improve routine accuracy. If an instrument meets method-defined optical requirements, further accuracy improvements should focus on sampling control, digestion consistency, reaction timing, and operator workflow—not on optical upgrades.
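
The idea of embedding analytical logic can be illustrated with a small method table that binds wavelength and calibration to the method name, so the operator chooses only the method. This is a conceptual sketch, not the firmware of any real instrument: the method keys, wavelengths, and calibration coefficients are placeholders, and `read_absorbance` stands in for a hypothetical hardware call.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class Method:
    name: str
    wavelength_nm: int  # placeholder value; the governing method defines it
    slope: float        # placeholder calibration, absorbance per mg/L
    intercept: float
    unit: str


# Illustrative method table. In dichromate low-range COD methods the
# absorbance typically falls as COD rises, hence the negative slope.
METHODS = {
    "COD-HR": Method("COD high range", 620, 0.0004, 0.0, "mg/L"),
    "COD-LR": Method("COD low range", 420, -0.0025, 0.45, "mg/L"),
}


def run_measurement(method_key, read_absorbance, operator):
    """Measure with the wavelength and curve bound to the chosen method.

    An unknown method key raises KeyError, so there is no silent fallback.
    """
    method = METHODS[method_key]
    absorbance = read_absorbance(method.wavelength_nm)
    concentration = (absorbance - method.intercept) / method.slope
    return {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "method": method.name,
        "wavelength_nm": method.wavelength_nm,
        "absorbance": absorbance,
        "result": round(concentration, 1),
        "unit": method.unit,
        "operator": operator,
    }


def fake_reader(wavelength_nm):
    """Stand-in for the detector: returns a fixed reading at 620 nm."""
    return 0.240 if wavelength_nm == 620 else 0.0


record = run_measurement("COD-HR", fake_reader, operator="op-07")
print(record)
```

The operator never types a wavelength, a formula, or a result, which removes the manual selection, calculation, and transcription steps listed among the risks above.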

8. Quality Control: Accuracy Must Be Continuously Proven

Accuracy is not a one-time achievement. It must be verified through:

✓ Reagent blanks: at least one per batch to correct for background

✓ Duplicate sample analysis: randomly select at least 10% of samples for duplicate analysis to assess precision

✓ Certified reference materials or spike recovery: include certified reference materials in each batch or daily to assess accuracy

✓ Regular method comparisons: compare against authoritative laboratories or standard methods to verify system consistency

Instrument self-checks alone are insufficient. QC results must validate the entire analytical process.

Engineering recommendation: Enforce mandatory QC for every batch. Out-of-control QC invalidates all associated sample results.
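
A batch acceptance rule of this kind can be written down explicitly so that it is applied the same way on every shift. The sketch below is one possible shape for such a rule. The 10% duplicate rate comes from the list above, while the blank limit, the relative percent difference limit, and the reference material tolerance are hypothetical values that a laboratory would replace with its own method-defined criteria.

```python
def relative_percent_difference(first, second):
    """Difference between duplicate results as a percentage of their mean."""
    return abs(first - second) / ((first + second) / 2) * 100


def evaluate_batch(n_samples, blank, duplicates, crm_measured, crm_certified,
                   blank_limit=0.5, max_rpd=10.0, max_crm_bias_pct=10.0):
    """Return a list of QC failures; an empty list means the batch is accepted.

    The default limits are illustrative assumptions, not regulatory values.
    """
    failures = []
    if abs(blank) > blank_limit:
        failures.append("reagent blank above limit")

    required_duplicates = (n_samples + 9) // 10  # at least 10%, rounded up
    if len(duplicates) < required_duplicates:
        failures.append("too few duplicate analyses")

    for first, second in duplicates:
        if relative_percent_difference(first, second) > max_rpd:
            failures.append(f"duplicate pair ({first}, {second}) exceeds RPD limit")

    bias_pct = (crm_measured - crm_certified) / crm_certified * 100
    if abs(bias_pct) > max_crm_bias_pct:
        failures.append("reference material result outside tolerance")
    return failures


# Hypothetical batch of 20 samples with two duplicate pairs and one CRM.
good = evaluate_batch(20, blank=0.1,
                      duplicates=[(102.0, 98.0), (55.0, 57.0)],
                      crm_measured=196.0, crm_certified=200.0)
bad = evaluate_batch(20, blank=0.1,
                     duplicates=[(102.0, 80.0)],
                     crm_measured=196.0, crm_certified=200.0)

print("good batch failures:", good)
print("bad batch failures:", bad)
```

Any non-empty failure list would mark every sample result in that batch as invalid, in line with the recommendation above.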

9. Overestimated Factors in Routine Testing

Certain factors are often overstated in daily analysis:

| Factor | Why It’s Overestimated | Engineering Reality |
| --- | --- | --- |
| Ultra-high optical resolution | Fixed-wavelength methods don’t need nm-level resolution | 1 nm vs 5 nm bandwidth rarely matters |
| Full-spectrum scanning | Endpoint measurement is sufficient | Usage rate typically <5% |
| Complex software features | Rarely used in routine work | Increases training burden |
| Extreme detection limits | Wastewater concentrations are much higher | ppb-level limits add little value |

These features matter in R&D, but add minimal value in standardized routine testing.

10. Engineering Summary: What Really Determines Accuracy

Key factors influencing photometer accuracy (by importance):

| Factor | Impact | Engineering Strategy |
| --- | --- | --- |
| Sample representativeness | ★★★★★ | Standardized sampling, training, homogenization |
| Pretreatment & digestion | ★★★★★ | Verified digestion control, batch QC |
| Reaction consistency | ★★★★☆ | Built-in timers, standardized workflow |
| Calibration & QC discipline | ★★★★☆ | Regular verification, documented control |
| Reduced operator dependency | ★★★☆☆ | Method-integrated photometers |
| Optical performance | ★★☆☆☆ | Meet method requirements, no overdesign |

Core conclusion: Optical performance is necessary, but once method requirements are met, process control becomes the dominant factor.

Conclusion

In routine water analysis, photometer accuracy is not achieved by chasing the most advanced optical specifications. It is achieved by controlling the entire analytical workflow from sampling to reporting. When processes are standardized, pretreatment is controlled, and operator dependency is minimized, photometer water quality analyzers can deliver highly reliable, regulation-compliant data day after day.

In laboratory engineering practice, the essence of accuracy does not lie in how precise an instrument is, but in how reliably the entire system operates under actual working conditions. An instrument that is "good enough" but backed by excellent process design is far more capable of producing consistently accurate data than a "high-end" one with complicated operation.

For laboratories focused on routine wastewater monitoring, multi-parameter photometer analyzers designed around standardized methods, built-in timers, and predefined curves are often a better engineering fit than general-purpose spectrophotometers.

Example: iWannaMP Multi-Parameter Photometer Water Quality Analyzer

