In the field of water quality analysis, a common misconception is that “the more parameters you measure, the better the data will be.” Many professionals assume that adding parameters automatically leads to a more comprehensive understanding of water quality. In reality, this is often not the case. In practical water quality engineering, data quality is determined by parameter relevance, measurement accuracy, and decision usability, not by the total number of parameters measured.

This article provides an in-depth analysis of this issue from an engineer’s practical perspective.

1. Data Overload: Why “More” Can Be Counterproductive

Many modern water quality instruments claim the ability to measure dozens or even hundreds of parameters, from basic indicators like pH, turbidity, and dissolved oxygen to various metal ions, nutrients, and specific organic compounds. This easily creates the illusion that more measurements equal more complete data.

However, from a systems engineering and control theory perspective, indiscriminate data collection often reduces the signal-to-noise ratio. Every additional parameter increases data dimensionality, and the effort required for data processing, cleaning, and correlation analysis grows much faster than the parameter count itself. Large volumes of weakly relevant data can obscure the truly critical abnormal signals, leaving engineers and analysts adrift in a “sea of data” and struggling to identify the features that reflect actual water quality conditions.
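To make this growth concrete, the minimal sketch below counts how many pairwise relationships and how many multi-parameter combinations could in principle be examined for a given number of parameters. Using these counts as a proxy for analytical effort is an assumption made for illustration, and the function name `relationship_counts` is hypothetical.

```python
from math import comb

def relationship_counts(n_params: int) -> dict:
    """Count candidate relationships among n_params measured parameters."""
    return {
        # Number of distinct parameter pairs (grows quadratically).
        "pairs": comb(n_params, 2),
        # Number of combinations of two or more parameters (grows exponentially).
        "subsets": 2 ** n_params - n_params - 1,
    }

for n in (5, 10, 30):
    print(n, relationship_counts(n))
```

Going from 10 to 30 parameters raises the number of pairs from 45 to 435, while the number of possible multi-parameter combinations becomes far too large to review by hand.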

From an engineering perspective, water quality data effectiveness can be simplified as:

Effective insight = relevant parameters × data accuracy ÷ analytical complexity

Increasing parameter count without improving relevance or accuracy often reduces overall insight.
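The heuristic above can be sketched in a few lines. This is only an illustration: the relationship is a qualitative rule of thumb, not a calibrated metric, and the choice to use the total parameter count as the complexity term, as well as all input values, are assumptions.

```python
def effective_insight(relevant_params: int, accuracy: float, complexity: float) -> float:
    """Heuristic score: relevant parameters x data accuracy / analytical complexity."""
    if complexity <= 0:
        raise ValueError("complexity must be positive")
    return relevant_params * accuracy / complexity

# Hypothetical scenarios: both panels contain the same 5 relevant parameters
# measured with the same accuracy, but the second panel also carries 25
# weakly relevant parameters. Complexity is approximated here by the total
# parameter count, which is an assumption.
focused = effective_insight(relevant_params=5, accuracy=0.95, complexity=5)
bloated = effective_insight(relevant_params=5, accuracy=0.95, complexity=30)

print(f"focused: {focused:.2f}, bloated: {bloated:.2f}")
```

Under these assumed inputs the larger panel scores lower, because the numerator is unchanged while the denominator grows.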

The real engineering challenge is not what can be measured, but what should be measured to support decisions.

2. The Decisive Role of Context: There Is No “Universal Parameter Set”

A water body is essentially a complex chemical reactor, with background conditions, pollution sources, and self-purification capacities varying widely. A mountain stream, an urban lake, a deep groundwater well, and an industrial recirculating cooling system all face fundamentally different water quality challenges. Applying a standardized parameter set to every scenario reflects a lack of engineering specificity.

- Groundwater vs. surface water: In deep groundwater, suspended solids are rarely a primary concern due to natural filtration by soil and rock. The focus should instead be on dissolved solids, hardness, iron, manganese, arsenic, and other geogenic parameters. Surface water, by contrast, requires attention to turbidity, algae, organic matter, ammonia nitrogen, and other indicators influenced by non-point source pollution.

- Process water specificity: In reverse osmosis pretreatment systems, engineers may focus primarily on silt density index (SDI), residual chlorine, and turbidity. In boiler feedwater systems, dissolved oxygen, hardness, pH, and conductivity are the critical parameters determining corrosion and scaling risks.

- Pollution source orientation: Downstream of industrial parks, monitoring characteristic pollutants (such as specific heavy metals or organic solvents) is far more valuable than routine general parameters. Measuring only basic indicators cannot identify pollution sources.

Typical engineering-driven parameter selection examples:

- Drinking water source monitoring: pH, turbidity, conductivity, residual disinfectant

- Industrial wastewater discharge: COD, ammonia nitrogen, characteristic pollutants

- Boiler or cooling water systems: conductivity, hardness, dissolved oxygen, pH

- Groundwater assessment: TDS, hardness, iron, manganese, arsenic

A mature engineer will first perform system diagnostics that define the functional role and potential risks of the water body, then design a minimal yet highly effective parameter set. This ensures every data point has clear physical meaning and engineering decision value.
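As a rough sketch, the example parameter sets listed above can be kept as a simple lookup that a monitoring plan is checked against. The dictionary keys and the function names `core_parameters` and `unjustified` are hypothetical; the parameter lists are the ones given in this article and would need to be adapted to each site.

```python
# Core parameter sets taken from the engineering-driven examples in this article.
CORE_PARAMETERS = {
    "drinking_water_source": ["pH", "turbidity", "conductivity", "residual disinfectant"],
    "industrial_wastewater_discharge": ["COD", "ammonia nitrogen", "characteristic pollutants"],
    "boiler_or_cooling_water": ["conductivity", "hardness", "dissolved oxygen", "pH"],
    "groundwater_assessment": ["TDS", "hardness", "iron", "manganese", "arsenic"],
}

def core_parameters(scenario: str) -> list[str]:
    """Return the core parameter set for a scenario, or fail loudly if none is defined."""
    try:
        return CORE_PARAMETERS[scenario]
    except KeyError:
        raise ValueError(
            f"No parameter set defined for {scenario!r}; run a system diagnostic first"
        ) from None

def unjustified(measured: list[str], scenario: str) -> list[str]:
    """List measured parameters that are not part of the scenario's core set."""
    core = set(core_parameters(scenario))
    return [p for p in measured if p not in core]

# Example: a groundwater program that also measures turbidity and COD.
print(unjustified(["TDS", "iron", "turbidity", "COD"], "groundwater_assessment"))
```

A parameter flagged this way is not necessarily wrong to measure; it simply needs an explicit engineering justification.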

3. The Cost Trap of Over-Testing

Adding parameters increases not only instrument purchase costs, but also hidden costs throughout the entire lifecycle:

- Reagents and consumables: Many parameters require dedicated reagents, standards, and digestion tubes, leading to significant ongoing expenses.

- Labor and time: More parameters mean longer analysis times, more frequent calibration and maintenance, and increased workload for engineers.

- Data management burden: Processing, reviewing, and reporting additional data also consumes resources.

For projects with budget constraints, such “over-testing” is unsustainable. Value engineering often shows that eliminating redundant parameters and concentrating resources on high-frequency, high-accuracy monitoring of key indicators is far more cost-effective.
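A back-of-the-envelope comparison of recurring costs might look like the sketch below. It covers only reagent and labor costs per test; the class and function names are hypothetical, and every figure is a placeholder for illustration, not a real price.

```python
from dataclasses import dataclass

@dataclass
class ParameterCost:
    name: str
    reagent_cost_per_test: float  # consumables used per measurement
    labor_hours_per_test: float   # analysis, calibration, and review time

def annual_cost(params, tests_per_year: int, labor_rate: float) -> float:
    """Recurring yearly cost of a parameter panel (instrument purchase excluded)."""
    return sum(
        tests_per_year * (p.reagent_cost_per_test + p.labor_hours_per_test * labor_rate)
        for p in params
    )

# Placeholder figures for illustration only.
core = [
    ParameterCost("COD", 2.0, 0.25),
    ParameterCost("ammonia nitrogen", 1.5, 0.20),
]
extra = [
    ParameterCost("total phosphorus", 2.5, 0.30),
    ParameterCost("hardness", 1.0, 0.15),
]

print("core panel:    ", annual_cost(core, tests_per_year=250, labor_rate=40.0))
print("extended panel:", annual_cost(core + extra, tests_per_year=250, labor_rate=40.0))
```

With real site figures substituted, the difference between the two totals is the budget that could instead fund higher-frequency monitoring of the key indicators.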

4. Accuracy Over Quantity: Instrument Performance Limitations

Every analytical instrument has an optimal operating range and inherent performance limits. From an analytical chemistry and instrumentation standpoint, “all-in-one” often means “compromise.”

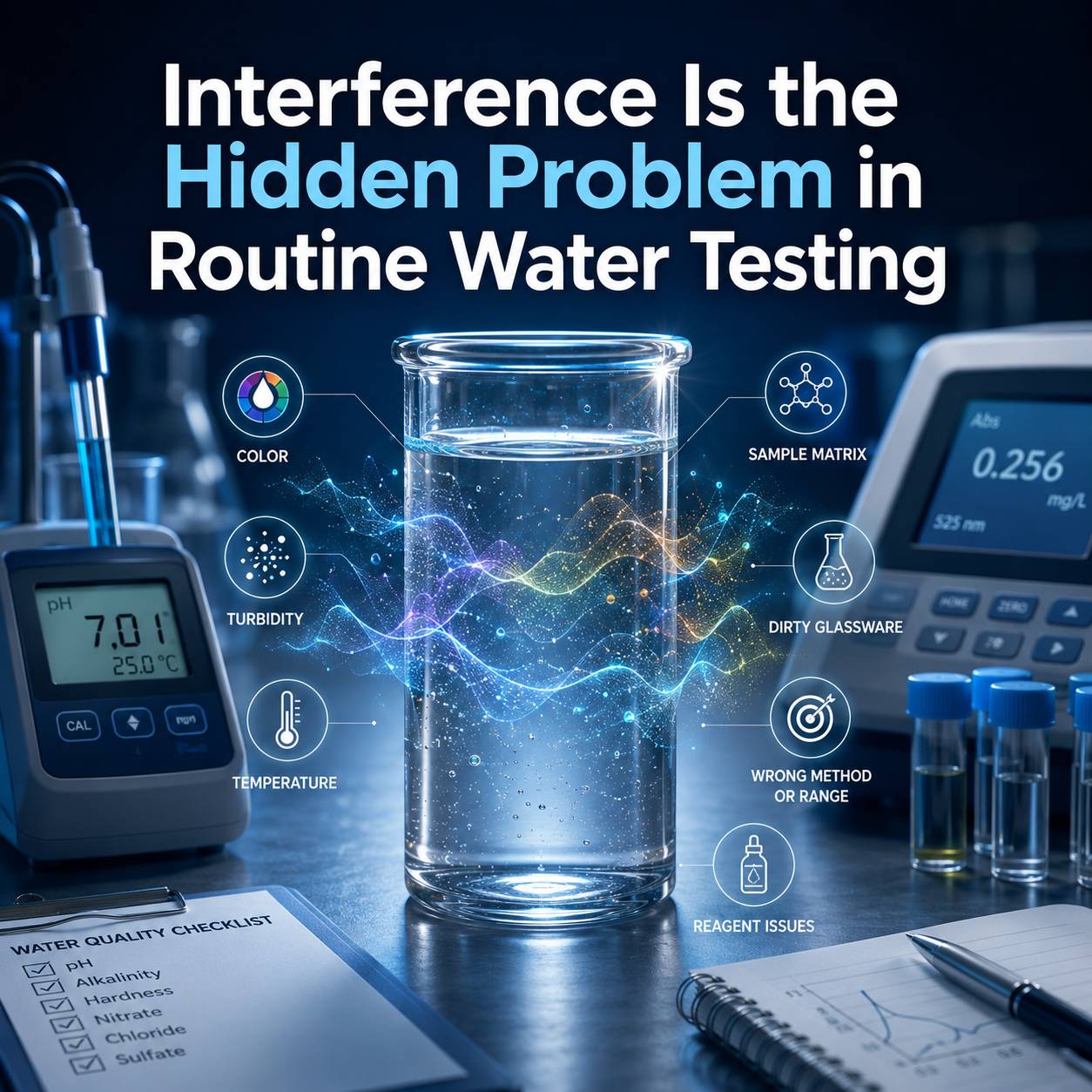

- Method interference: Some measurement principles interfere with each other. For example, when using spectrophotometry to measure low-level total phosphorus, sample color or turbidity can introduce significant errors, requiring complex pretreatment or background correction.

- Reduced sensitivity: To cover wide ranges, general-purpose multi-parameter instruments may sacrifice sensitivity for specific parameters. When measuring trace contaminants (e.g., ppb-level heavy metals), detection limits may be insufficient compared to dedicated single-parameter analyzers.

- Maintenance complexity: The more sensors integrated into a system, the more likely that drift, fouling, or failure of one sensor affects overall system stability, making troubleshooting and maintenance more difficult.

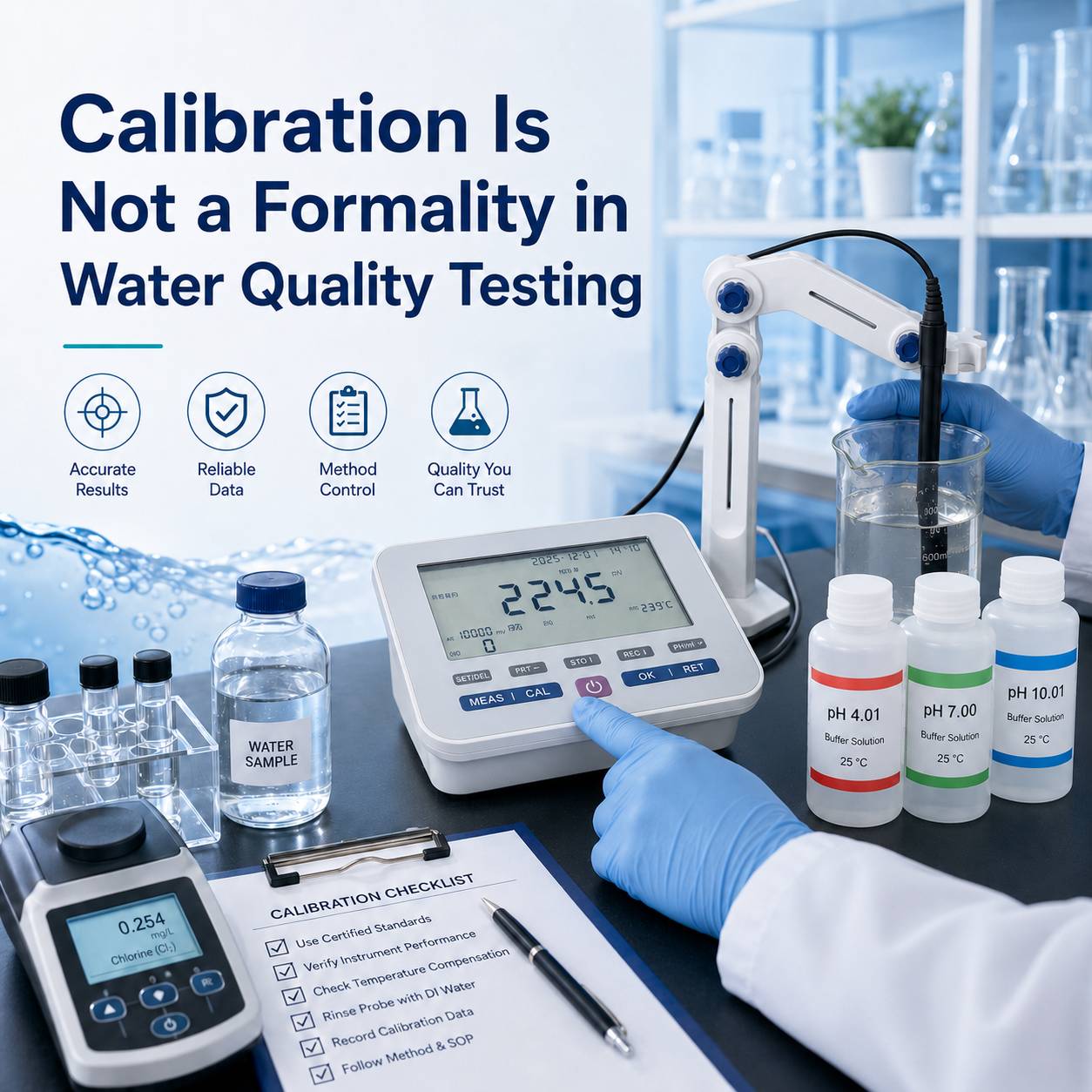

High-quality engineering practice is based on a deep understanding of measurement principles, selecting the optimal method for each critical parameter, and ensuring operation under optimal conditions. One rigorously controlled, high-precision parameter (such as pH or dissolved oxygen) is far more valuable than ten vague values obtained with low-precision, interference-prone methods.

In real-world projects, engineers often achieve better outcomes by selecting fewer parameters with dedicated or optimized instruments, rather than relying on a single “all-in-one” analyzer with compromised performance across many methods.
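One simple way to screen an instrument for a trace-level application is to compare its detection limit with the concentration at which a decision must be made. The sketch below assumes a rule-of-thumb safety margin of 10; that factor, the function name `fit_for_purpose`, and the example values are assumptions, not requirements taken from any standard.

```python
def fit_for_purpose(detection_limit: float, decision_threshold: float,
                    margin: float = 10.0) -> bool:
    """Return True if the detection limit is at least `margin` times below the threshold.

    Both limits must be in the same units. `margin` is an assumed
    rule-of-thumb factor, not a regulatory requirement.
    """
    if detection_limit <= 0 or decision_threshold <= 0:
        raise ValueError("limits must be positive")
    return detection_limit * margin <= decision_threshold

# Hypothetical example in ug/L (ppb) with a 10 ug/L action threshold.
print(fit_for_purpose(detection_limit=5.0, decision_threshold=10.0))  # general-purpose unit
print(fit_for_purpose(detection_limit=0.5, decision_threshold=10.0))  # dedicated analyzer
```

Here the hypothetical general-purpose unit fails the check and the dedicated analyzer passes, which mirrors the sensitivity trade-off described above.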

5. The Gap Between Data Interpretation and Engineering Decisions

The goal of water quality monitoring is action: opening or closing valves, dosing chemicals, adjusting processes, or issuing warnings. Data is valuable only if it is interpretable and actionable.

When too many parameters are monitored, coupling relationships become highly complex, making it difficult to establish clear cause-and-effect models. For instance, monitoring 30 parameters may reveal that 20 of them are unrelated to the actual engineering problem. Instead of providing insight, they distract from identifying the primary issue.
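A first-pass way to see which monitored parameters relate to the problem at hand is a simple correlation screen against the indicator of interest. The sketch below uses a plain Pearson correlation with an assumed cutoff of 0.5; the function names, the cutoff, and the readings are all hypothetical.

```python
from math import sqrt

def pearson(x, y):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = sqrt(sum((a - mx) ** 2 for a in x))
    sy = sqrt(sum((b - my) ** 2 for b in y))
    if sx == 0 or sy == 0:
        return 0.0  # a constant series carries no linear information
    return cov / (sx * sy)

def screen(candidates: dict, target: list, min_abs_r: float = 0.5) -> list:
    """Keep candidate parameters whose |r| with the target meets the cutoff."""
    return [name for name, series in candidates.items()
            if abs(pearson(series, target)) >= min_abs_r]

# Made-up readings for illustration only.
target = [1.0, 2.0, 3.0, 4.0, 5.0]           # the problem indicator
candidates = {
    "turbidity": [2.1, 3.9, 6.2, 8.0, 9.8],  # tracks the target closely
    "pH": [7.2, 7.1, 7.2, 7.1, 7.2],         # essentially unrelated
}
print(screen(candidates, target))
```

A correlation screen only detects linear association and says nothing about cause, so parameters it drops should still be reviewed against process knowledge before being removed from a monitoring plan.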

Effective engineering data must have a high signal-to-noise ratio, where each data point clearly indicates a specific condition or process. Engineers need key indicators that can be directly used for closed-loop control or decision support—not large datasets that require extensive effort just to extract meaning.

6. Optimizing Parameter Selection: An Engineering-Oriented Testing Strategy

To improve the effectiveness of water quality monitoring, the following engineering steps are recommended:

Define objectives and boundaries:

Clearly establish why you are measuring (compliance, process control, pollution tracing) and where (source, process, discharge).Risk and critical point analysis:

Identify the top 3–5 factors with the greatest impact on system function, stability, or compliance.Select a core parameter set:

Choose parameters that directly represent these critical factors as core monitoring indicators.Validate and iterate:

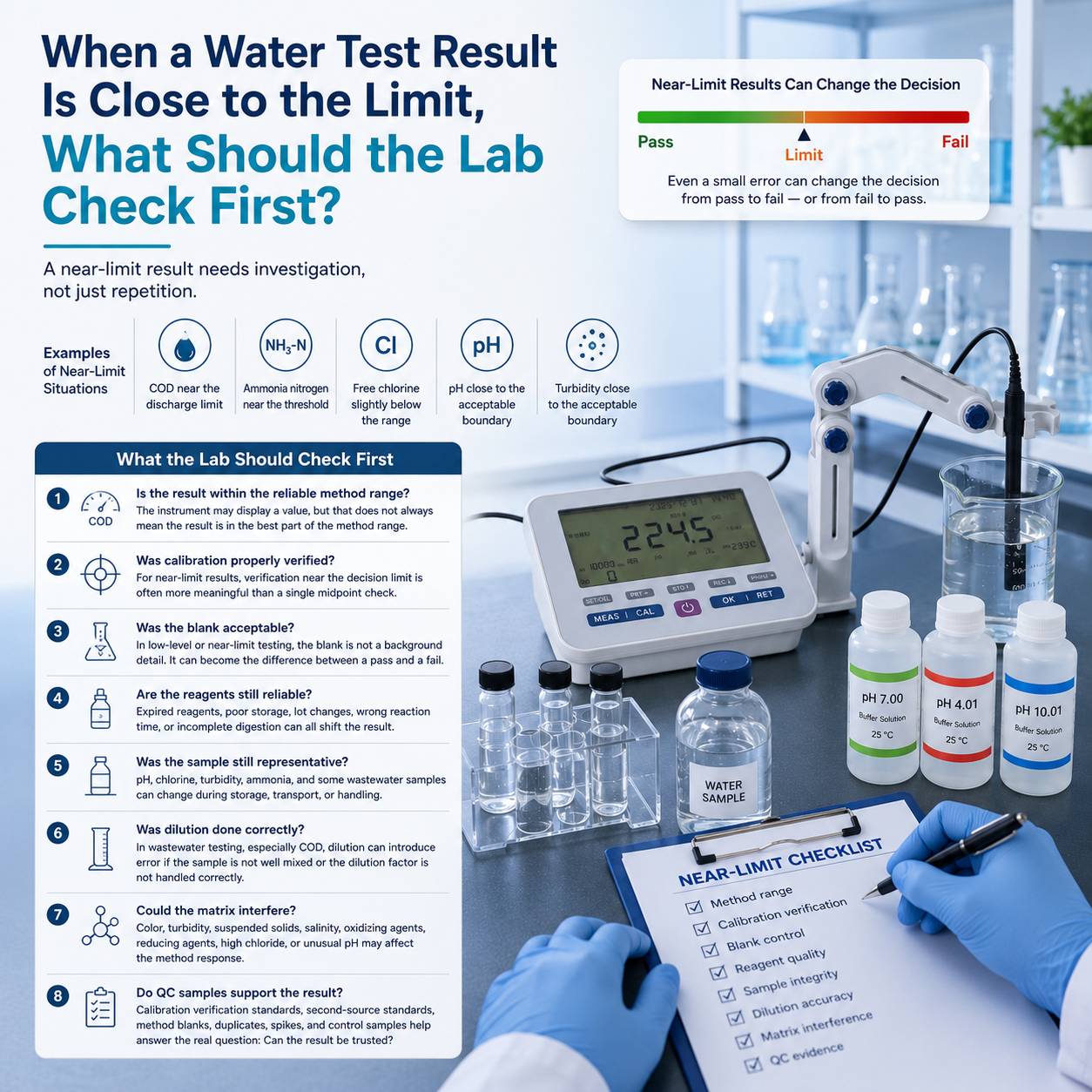

Initially include a small number of auxiliary parameters for verification. Once the data model is validated, remove redundant parameters and standardize the monitoring scheme.Quality control:

Regardless of the number of parameters, establish strict QA/QC procedures—blanks, duplicates, spike recovery, and reference materials—to ensure all reported data is accurate and reliable.
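Two of the quality control checks named in the steps, spike recovery and duplicates, reduce to short calculations. The sketch below uses the usual definitions of percent recovery and relative percent difference; the function names and the sample values are illustrative, and acceptance limits are deliberately left out because they should come from the analytical method or the laboratory's QA plan.

```python
def spike_recovery_percent(spiked_result: float, unspiked_result: float,
                           spike_added: float) -> float:
    """Percent recovery of a known spike added to a sample."""
    if spike_added <= 0:
        raise ValueError("spike_added must be positive")
    return (spiked_result - unspiked_result) / spike_added * 100.0

def relative_percent_difference(a: float, b: float) -> float:
    """Relative percent difference (RPD) between two duplicate results."""
    mean = (a + b) / 2.0
    if mean == 0:
        raise ValueError("duplicate results average to zero")
    return abs(a - b) / mean * 100.0

# Illustrative values in mg/L, not real measurements.
recovery = spike_recovery_percent(spiked_result=1.45, unspiked_result=0.50, spike_added=1.00)
rpd = relative_percent_difference(0.50, 0.54)
print(f"recovery: {recovery:.1f} %, RPD: {rpd:.1f} %")
```

Tracking these two numbers for each core parameter over time gives an early signal of drift or interference without adding any new parameters.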

Conclusion

For engineers, water quality analysis is not about piling up parameters; it is about producing data that can be trusted and acted upon. A smaller number of carefully selected, high-precision key parameters is far more powerful than a large set of disorganized, low-value data. True professional competence lies in knowing what to measure and why, not merely how much can be measured.

By abandoning the “more is better” mindset and returning to engineering fundamentals (focusing on accuracy, relevance, and actionability), we can build water quality monitoring and management systems that are truly efficient, reliable, and economical.

If you are reviewing your current monitoring strategy, consider reframing it as a systems engineering problem: Does every parameter you measure serve a specific engineering question? Through such “streamlining” and refocusing, you may achieve more insightful data and far more reliable water quality management outcomes.

+852 46135220
